Power Apps | Robotic Process Automation with AI Builder and UI flow (Part 3/3)

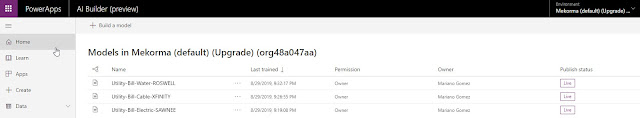

This is the final installment of a three-part vlog series on Robotic Process Automation with Power Automate UI flows and Microsoft Dynamics GP. In this episode, I add AI Builder capabilities to present an end-to-end solution that you can implement in your business *now*. The video shows how to use AI Builder form processing to read a sample utility bill and submit it to your legacy ERP system. The objective is to show yet another integration mechanism for getting data into another application; this video is in no way intended to suggest that you replace your current integration processes with what you see here. It does, however, open the door to modernizing the tools you have in house with the flexibility of the Power Platform.

My previous videos in this series can be found here: Robotic Process Automation with Microsoft Dynamics GP and UI flow (Part 1/3) - Click here Robotic Process Automation with Microsoft Dynamics GP and UI flow ...